Introduction

Artificial intelligence is becoming part of daily life, and tools such as Qwen AI are making it easier to interact with technology. Qwen AI is a modern language model designed to understand and generate human-like responses. It can assist with writing, problem-solving, coding, and even image analysis, making it useful for a wide range of users. Qwen AI stands out for its ability to handle a range of tasks smoothly while remaining easy to use, combining speed, accuracy, and flexibility in one system. This article on Qwen AI statistics covers its users, adoption, website traffic, models, pricing, and benchmarks. As AI continues to grow, Qwen AI demonstrates how technology can support learning, creativity, and everyday work in a simple, effective way.

Editor’s Choice

- Alibaba Group Holding Limited (NYSE: BABA) is trading at USD 135.38 as of April 21, 2026.

- In April 2026, the stock remained about 29.61% below its recent peak.

- Alibaba Group does not report Qwen revenue separately but expects around 40% YoY growth in AI products between 2026 and 2027.

- A January 2026 report by LinkedIn found that the Qwen AI platform had 31.05 million monthly active users.

- Qwen AI platform models had crossed 700 million total downloads.

- In 2025, Qwen AI usage was highest in Iraq (27.52%).

- More than 90,000 enterprises used the Qwen model family.

- By December 2025, the Qwen app had reached 18.34 million monthly active users within just two weeks.

- The Qwen AI platform recorded 42.19 million visits in March 2026.

- On qwen.ai, most traffic comes from desktops at 77.92%, while mobile devices contribute the remaining 22.08% of visits.

- Qwen-Max supports up to 32,768 tokens and costs USD 0.0016 per 1,000 input tokens and USD 0.0064 per 1,000 output tokens.

Qwen AI Overview

| Category | Details |

| Name | Qwen |

| Developer | Alibaba Cloud |

| First Release | First launched in April 2023. |

| Latest Versions | The most recent version, Qwen3.6-Max-Preview, was released on April 18, 2026. Other updates include Qwen3.6-35B-A3B (April 15, 2026), Qwen3.6-Plus (April 1, 2026), and Qwen3-Coder-Next (February 2, 2026). |

| Programming Language | Qwen is mainly built using Python. |

| Supported Platforms | It is available as a web application and also supports Android devices. |

| Type | Serves as a chatbot for various tasks. |

| License | It uses different licenses depending on the model version. |

| Code Repository | github.com/QwenLM/Qwen |

| Official Website | chat.qwen.ai |

Key Features Of Qwen AI

- Qwen AI can handle text, images, and audio.

- It helps with writing, translation, chatting, image understanding, and voice-to-text tasks.

- It supports long inputs (up to 128k tokens), making it useful for long documents and deep conversations.

- Qwen-Coder helps developers write, fix, and understand code easily.

- Qwen-Math is designed to solve advanced math problems with better accuracy.

- It supports over 119 languages and dialects.

- The model family ranges from 0.5 billion to 235 billion parameters and includes efficient Mixture-of-Experts (MoE) designs.

- Many versions are open-source, allowing easy customization and flexible use.

Alibaba Stock Analysis

(Source: cloudinary.com)

- Alibaba Group Holding Limited (NYSE: BABA) is trading at USD 135.38 as of April 21, 2026.

- The average Wall Street target price of USD 192 is about 41.8% above the current share price, which puts the stock roughly 29.5% below that target.

- The share price experienced a maximum drawdown of 36.77% as of 7 April 2026.

- Even after a small rebound in April 2026, the stock remained about 29.61% below its recent peak.

- Alibaba trades about 30% below its USD 192.67 high, yet comfortably above USD 103.71.

- The company currently has a market value of around USD 314 billion with a P/E ratio of 25.02x.

- Alibaba is shifting from an e-commerce-focused business to a cloud and AI-driven platform.
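The percentages in this section depend on which value is used as the base. A minimal sketch of that arithmetic, using only the price, target, and high quoted above (the helper name `pct_change` is hypothetical and introduced here for illustration):

```python
def pct_change(from_value: float, to_value: float) -> float:
    """Percentage change when moving from from_value to to_value."""
    return (to_value - from_value) / from_value * 100.0


price = 135.38   # share price quoted above (USD)
target = 192.00  # average analyst target quoted above (USD)
high = 192.67    # high quoted above (USD)

# Base = current price: how much the stock must rise to reach the target.
upside_to_target = pct_change(price, target)

# Base = target or high: how far the current price sits below that level.
gap_below_target = -pct_change(target, price)
gap_below_high = -pct_change(high, price)

print(f"Upside to target:  {upside_to_target:.1f}%")
print(f"Gap below target:  {gap_below_target:.1f}%")
print(f"Gap below high:    {gap_below_high:.1f}%")
```

The same USD 56.62 difference reads as about 41.8% when measured from the current price and about 29.5% when measured from the target, so it matters which base a quoted figure uses.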

Qwen AI Revenue Analysis

- Alibaba Group does not report Qwen revenue separately but expects around 40% YoY growth in AI products between 2026 and 2027.

- AI contributed over 20% of Alibaba Cloud’s external revenue in early 2026.

- AI revenue has grown by more than 100% YoY for 10 straight quarters.

- In Q3 2026, revenue is projected to reach USD 39.8 billion with AI as a key growth driver.

Qwen AI User Statistics

- A January 2026 report by LinkedIn found that the Qwen AI platform recorded 31.05 million monthly active users across the app, web, and PC platforms.

- The Qwen app reached 166 million MAUs in China, with users interacting an average of 19.8 times per month.

- During a promotional campaign, Alibaba Group invested CNY 3 billion (about USD 432 million) in incentives to boost engagement.

- The platform processed 120 million orders in six days, showing very high user activity.

- Daily active users peaked at 73.52 million on 7 February 2026, up from 7.07 million, a 940% increase.
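The growth figures above can be checked with simple arithmetic. The following is a rough sketch using only the numbers quoted in this section; the function names are hypothetical, and the orders-per-day figure is a plain average that assumes nothing about how orders were distributed across the six days.

```python
def percent_increase(start: float, end: float) -> float:
    """Percentage increase from start to end."""
    return (end - start) / start * 100.0


def growth_multiple(start: float, end: float) -> float:
    """How many times larger end is than start."""
    return end / start


dau_before = 7.07  # daily active users before the peak (millions)
dau_peak = 73.52   # daily active users on 7 February 2026 (millions)

increase = percent_increase(dau_before, dau_peak)
multiple = growth_multiple(dau_before, dau_peak)

# 120 million orders over six days, expressed as a simple daily average.
orders_per_day = 120 / 6

print(f"Increase: {increase:.0f}%")
print(f"Multiple: {multiple:.1f}x")
print(f"Average orders per day: {orders_per_day:.0f} million")
```

A 940% increase corresponds to roughly 10.4 times the starting level, not 9.4 times, because the increase is measured on top of the original 100%.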

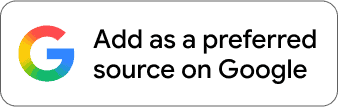

By Country

(Source: grabon.in)

- As of 2025, Qwen AI usage remained the highest in Iraq, at 27.52%.

- After Iraq, the largest user shares came from Brazil (19.08%), Turkey (12.10%), and Russia (10.60%).

- Meanwhile, the United States accounted for 6.15% of total users.

Qwen AI Core Usage

- A Wearetenet report noted that Qwen models had crossed 700 million total downloads on Hugging Face by January 2026.

- In December 2025, Qwen downloads exceeded the combined total of the next 8 major model families, showing strong market leadership.

- Over 2.2 million corporate users access Qwen through DingTalk.

- More than 90,000 enterprises were using Qwen services in 2025.

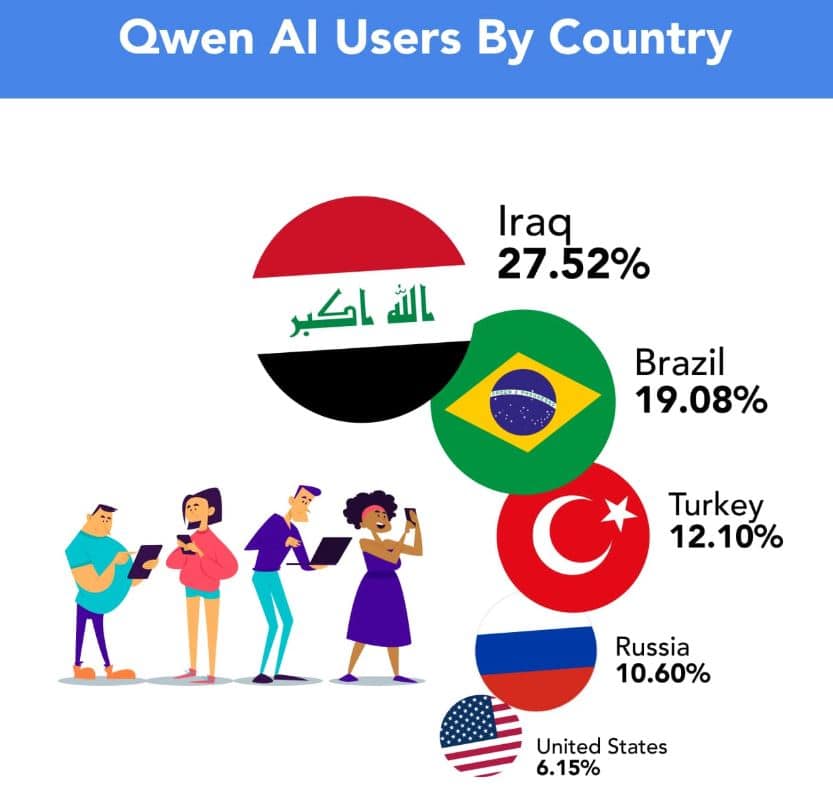

Qwen AI Adoption Statistics

(Reference: wearetenet.com)

- Qwen AI’s adoption level in October 2025 was set as the baseline at 100%.

- By November 2025, Qwen AI’s adoption increased to 249%, meaning usage was roughly 2.5 times higher than in October.

Qwen AI 2.5 Adoption

- According to Grabon, Qwen’s AI services have reached more than 2.2 million corporate users via DingTalk, Alibaba’s workplace collaboration platform.

- In 2025, more than 90,000 enterprises adopted the Qwen model family.

- Qwen AI 2.5 supports 29+ languages, and the open-source Qwen lineup spans 0.5-110 billion parameters.

- The newest Qwen 2.5 models are pre-trained on a refreshed dataset of up to 18 trillion tokens.

- Qwen2.5-Coder is trained on 5.5 trillion tokens and covers 92+ programming languages.

Qwen App Statistics

(Source: awisee.com)

- By December 2025, the Qwen app had reached 18.34 million monthly active users within just two weeks.

- It recorded strong month-over-month growth of 149%.

- Globally, it ranked #24 among AI apps.

- On the consumer side, the app reported around 30 million monthly active users.

- For enterprise use, over 90,000 businesses adopted it through Alibaba Cloud and DingTalk.

- Most users access Qwen via mobile web (65.95%), while 34.05% use desktop.

- Additionally, more than 2.2 million users connect through DingTalk.

Qwen AI Website Traffic Statistics

- The Qwen AI platform recorded 42.19 million visits in March 2026.

- Website traffic increased by 34.82% compared with February 2026.

- The average session duration reached 10 minutes and 35 seconds, and users viewed about 4.43 pages per visit.

- The bounce rate stood at 39.88%.

By Country & Device Usage

- On qwen.ai, most traffic comes from desktops at 77.92%, while mobile devices contribute the remaining 22.08% of visits, according to Semrush.

| Country | Total Traffic Share | Visits (million) | Desktop Usage | Mobile Usage |

| Russian Federation | 21.98% | 9.27 | 95.25% | 4.75% |

| India | 11.18% | 4.71 | 28.45% | 71.55% |

| United States | 9.38% | 3.96 | 78.53% | 21.47% |

| China | 5.98% | 2.52 | 100% | 0% |

| Brazil | 4.32% | 1.82 | 76.23% | 23.77% |
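The visit counts in the table appear to follow from each country's traffic share applied to the monthly total. A minimal sketch of that calculation, assuming the 42.19 million visits reported for March 2026 is the base for these shares (that link is an assumption, and the function name is hypothetical):

```python
TOTAL_VISITS_M = 42.19  # March 2026 visits in millions (assumed base)

# country: (traffic share %, desktop %, mobile %), copied from the table
ROWS = {
    "Russian Federation": (21.98, 95.25, 4.75),
    "India": (11.18, 28.45, 71.55),
    "United States": (9.38, 78.53, 21.47),
    "China": (5.98, 100.0, 0.0),
    "Brazil": (4.32, 76.23, 23.77),
}


def visits_breakdown(total_m: float, share_pct: float, desktop_pct: float):
    """Return (total, desktop, mobile) visits in millions for one country."""
    visits = total_m * share_pct / 100
    desktop = visits * desktop_pct / 100
    return visits, desktop, visits - desktop


for country, (share, desktop_pct, _mobile_pct) in ROWS.items():
    visits, desktop, mobile = visits_breakdown(TOTAL_VISITS_M, share, desktop_pct)
    print(f"{country:<20} {visits:5.2f}M total  "
          f"{desktop:5.2f}M desktop  {mobile:5.2f}M mobile")

# Combined share of the five listed countries.
top_five_share = sum(share for share, _, _ in ROWS.values())
print(f"Top five combined share: {top_five_share:.2f}%")
```

Because the published shares are rounded, a computed value can differ from the table in the last digit.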

Recently Released Qwen Models

| Release Date | Model/version | Type/license | Key numeric information |

| September 2025 | Qwen3‑Max | Proprietary flagship LLM | Dense frontier‑scale model; positioned above earlier Qwen3‑235B model; competitive with top‑tier frontier models on coding, math, and reasoning benchmarks (exact parameter count not publicly disclosed, but described as “frontier‑scale”). |

| September 2025 | Qwen3‑Next | Open‑source LLM (Apache 2.0) | Efficiency‑optimized successor in the Qwen3 family; trained on trillions of tokens with improved training/inference efficiency versus Qwen3, targeting lower compute per token at similar quality. |

| September 2025 | Qwen3‑Omni | Open‑source multimodal model (Apache 2.0) | Unified text‑and‑vision model; built on Qwen3 architecture; supports multiple modalities in a single model with benchmark scores close to proprietary frontier multimodal systems. |

| September 2025 | Qwen3‑VL | Open‑source vision‑language model (Apache 2.0) | Vision‑language model optimized for image understanding and OCR‑style tasks; trained on trillions of multimodal tokens; positioned as successor to Qwen‑VL‑Max. |

| September 2025 | Qwen‑Image‑Edit‑2509 | Open‑source image‑editing model | Built on a 20 billion-parameter Qwen‑Image base; provides high‑fidelity image editing with resolution up to at least 1024×1024 and supports mask‑based editing workflows. |

| September 2025 | Qwen3‑TTS‑Flash | Speech model | High‑speed text‑to‑speech model; optimized for low‑latency generation (sub‑second response on consumer GPUs) while maintaining natural prosody; exact parameter count not disclosed. |

| February 2026 | Qwen3‑Coder‑Next | Open‑source code model (Apache 2.0) | Next‑generation coding model in the Qwen3 family; designed for competitive‑level code generation across multiple languages; parameter sizes not fully disclosed, but built as a successor to Qwen3‑Coder‑480B‑A35B and Qwen3‑Coder‑30B‑A3B. |

| 16 February 2026 | Qwen3.5 (family) | Open‑weights LLMs (Apache 2.0) | Flagship model Qwen3.5‑397B‑A17B with 397B total parameters and 17B active parameters, using sparse MoE; supports 256K context window and 201 languages; released as commercially usable open weights. |

| 16 February 2026 | Qwen3.5‑Plus | Proprietary enhanced LLM | Paid, higher‑capability version of Qwen3.5 exposed via Qwen chat and Alibaba Cloud APIs; same 256K context tier with improved reasoning and tool‑use; parameters not disclosed publicly. |

| Early April 2026 | Qwen3.5‑Omni | Proprietary multimodal model | Closed‑source multimodal successor to Qwen3‑Omni; supports long‑context text plus images, audio, and video; designed as a premium tier for paid Qwen and Alibaba Cloud customers; context window up to 1 million tokens reported in coverage. |

| Early April 2026 | Qwen3.6‑Plus | Proprietary flagship LLM | New flagship “agentic” LLM; reports up to 1 million token context window by default and significantly higher coding/agent benchmarks than Qwen3.5‑Plus; third proprietary model launched that week. |

| April 2026 | Qwen3.6‑35B‑A3B | Open‑source LLM (Apache 2.0) | Sparse MoE model in the Qwen3.6 line with 35 billion total parameters and about 3 billion active parameters, as the 35B‑A3B name indicates; optimized for local and enterprise deployment with better efficiency than earlier dense 30B models; open‑weights under Apache 2.0. |

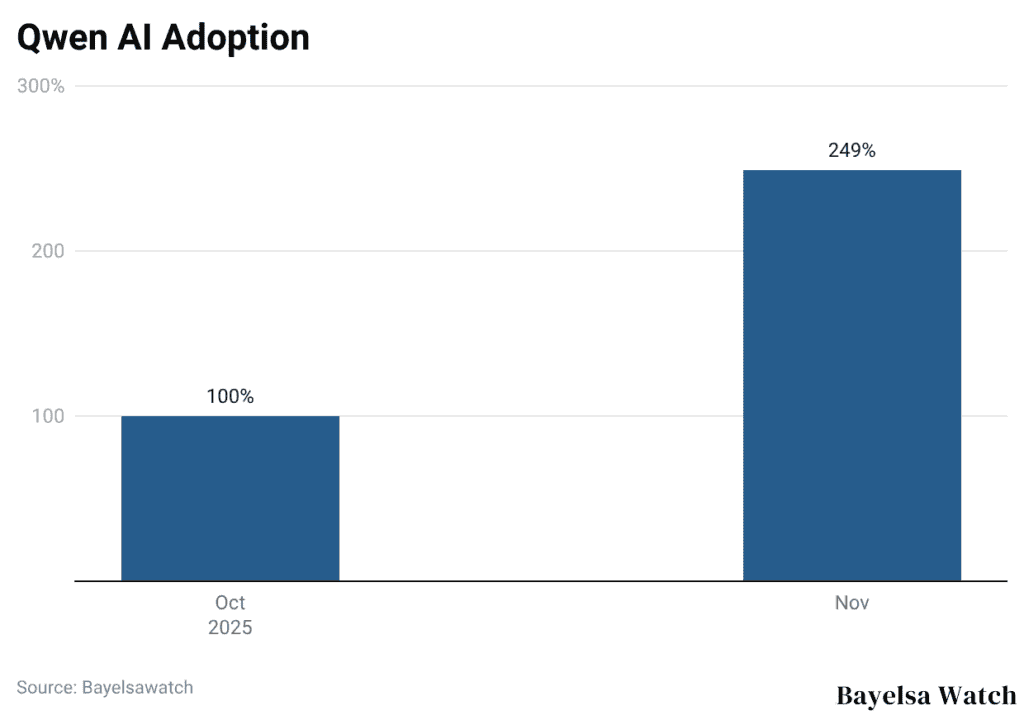

Qwen AI Models Pricing Statistics

(Source: godofprompt.ai)

- Qwen-Max supports up to 32,768 tokens and costs USD 0.0016 per 1,000 input tokens and USD 0.0064 per 1,000 output tokens.

- Qwen-Plus handles up to 131,072 tokens, priced at USD 0.0004 per 1,000 input tokens and USD 0.0012 per 1,000 output tokens.

- For maximum efficiency, Qwen-Turbo allows up to 1,000,000 tokens and costs USD 0.00005 per 1,000 input tokens and USD 0.0002 per 1,000 output tokens.
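To turn these per-1,000-token rates into the cost of a single request, multiply each rate by the token count divided by 1,000. The sketch below uses the prices and limits listed above; the `ModelPricing` and `request_cost` names are hypothetical, and treating the token limit as a cap on input plus output combined is an assumption, since the source does not say how the limit is split.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPricing:
    context_tokens: int   # maximum tokens supported, as listed above
    input_per_1k: float   # USD per 1,000 input tokens
    output_per_1k: float  # USD per 1,000 output tokens


PRICING = {
    "Qwen-Max": ModelPricing(32_768, 0.0016, 0.0064),
    "Qwen-Plus": ModelPricing(131_072, 0.0004, 0.0012),
    "Qwen-Turbo": ModelPricing(1_000_000, 0.00005, 0.0002),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one request at the listed rates."""
    p = PRICING[model]
    # Assumption: the listed limit covers input and output together.
    if input_tokens + output_tokens > p.context_tokens:
        raise ValueError(f"{model} supports at most {p.context_tokens} tokens")
    return (input_tokens / 1000 * p.input_per_1k
            + output_tokens / 1000 * p.output_per_1k)


# Example: a request with 10,000 input tokens and 2,000 output tokens.
for name in PRICING:
    print(f"{name}: USD {request_cost(name, 10_000, 2_000):.4f}")
```

For this example request, the estimate comes to about USD 0.0288 on Qwen-Max, USD 0.0064 on Qwen-Plus, and USD 0.0009 on Qwen-Turbo.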

Qwen AI Paid Access & Pricing Structure

| Model/Plan | Input Cost (per 1 million tokens) | Output Cost (per 1 million tokens) |

| Qwen Plus (API level 2026) | USD 0.26 | USD 0.78 |

| Qwen2.5-7B-Instruct | USD 0.03 | USD 0.03 |

| Qwen2-57B-A14B | USD 0.16 | USD 0.16 |

| Qwen-Max (Enterprise) | USD 1.60 | USD 6.40 |

| Qwen-Plus (Cloud Studio) | USD 0.40 | USD 1.20 |

| Qwen-Turbo | USD 0.10 | USD 0.30 |
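The per-million-token rates in the table make it straightforward to compare plans for a given workload. The following is an illustrative sketch only: the monthly volume of 50 million input tokens and 10 million output tokens is a made-up example, and the function names are hypothetical.

```python
# (input USD, output USD) per 1 million tokens, copied from the table
PRICES_PER_1M = {
    "Qwen Plus (API level 2026)": (0.26, 0.78),
    "Qwen2.5-7B-Instruct": (0.03, 0.03),
    "Qwen2-57B-A14B": (0.16, 0.16),
    "Qwen-Max (Enterprise)": (1.60, 6.40),
    "Qwen-Plus (Cloud Studio)": (0.40, 1.20),
    "Qwen-Turbo": (0.10, 0.30),
}


def workload_cost(input_tokens: int, output_tokens: int, prices) -> float:
    """USD cost of a workload at the given (input, output) per-1M rates."""
    in_price, out_price = prices
    return (input_tokens / 1_000_000 * in_price
            + output_tokens / 1_000_000 * out_price)


def rank_models(input_tokens: int, output_tokens: int):
    """Return (model, cost) pairs sorted from cheapest to most expensive."""
    costs = {
        name: workload_cost(input_tokens, output_tokens, prices)
        for name, prices in PRICES_PER_1M.items()
    }
    return sorted(costs.items(), key=lambda item: item[1])


# Hypothetical monthly workload: 50M input tokens, 10M output tokens.
for name, cost in rank_models(50_000_000, 10_000_000):
    print(f"{name:<28} USD {cost:8.2f}")
```

Price is only one factor; the models in the table differ widely in capability, so the cheapest row is not necessarily the right choice for a given task.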

Qwen AI Benchmark Performance Analysis

| Benchmark/Task | Qwen Model, 2026 | Score/Result |

| HumanEval (coding) | Qwen2.5-Coder | 92% |

| SWE-bench Pro | Qwen3.6-Max-Preview | 58.4% |

| Terminal-Bench 2.0 | Qwen3.6-Max-Preview | 65.4% |

| SkillsBench | Qwen3.6-Max-Preview | +9.9 improvement |

| SciCode | Qwen3.6-Max-Preview | +10.8 improvement |

| NL2Repo | Qwen3.6-Max-Preview | +5.0 improvement |

Pros And Cons Of Qwen AI

Pros

- It supports text, images, and audio, making it a multimodal AI system.

- It can handle long inputs up to 128k tokens for better document analysis.

- It performs well in coding and math tasks through Qwen-Coder and Qwen-Math.

- It supports 119+ languages for global users.

- It scales efficiently from small to very large models using MoE design.

Cons

- Smaller Qwen models sometimes perform weaker than the top competitors.

- Many high-end models are costlier to run.

- Enterprise use requires strong safety controls.

- Global use depends on regional rules and compliance.

- Open-source flexibility requires ongoing maintenance and governance.

Conclusion

In conclusion, Qwen is quickly moving from a research model to a real-world AI tool. Its performance, language ability, and test results are improving over time. More users, downloads, and community support show strong growth. It is now widely used for coding, translation, and task-based work. With new versions being released, the main focus is on performance, cost per token, and safe use at scale in daily applications.

FAQ

Qwen AI processes text well but still lacks human creativity, empathy, and deep emotional understanding in complex tasks.

Qwen AI offers modern protections but still has notable privacy and security concerns; avoid sharing sensitive data.

Qwen Image effectively generates photorealistic portraits, digital art, and complex compositions.

The Qwen AI platform offers free text-to-video generation via the Qwen AI Video Generator, including the 2.5 Max version for fast online results.

ChatGPT shines at general conversation and coding, while Qwen excels in multilingual, open-source, enterprise-style workflows.