Introduction

AI Chips Statistics: AI chips are specialized hardware accelerators engineered to execute complex machine learning (ML) and neural network workloads with high throughput and energy efficiency. They power smart devices, machine learning applications, and large-scale data processing. As AI spreads through daily life and business, demand for these chips is growing fast. This article presents key statistics and trends in the AI chip market. It covers growth, usage, and future scope, and shows how new technologies and rising data needs are driving this fast-growing industry.

Editor’s Choice

- In 2026, the global AI chip market is projected to reach approximately USD 51 billion.

- CPUs held the largest share of the global AI chip market in 2025 at 29%, followed closely by GPUs at 26% and NPUs at 17%.

- According to SQ Magazine, data centers remained the largest consumers of AI chips, accounting for approximately 52% of all units sold globally in 2025.

- A report published by Electroiq found that in 2022, leaders such as NVIDIA and Google lost over USD 400 billion in market value, Amazon lost about USD 270 billion, and AMD lost around USD 30 billion. The 2025-2026 AI super-cycle has since pushed valuations to record highs.

- According to Knowledge Sourcing Intelligence, NVIDIA reported total revenue of approximately USD 57 billion in Q3 of fiscal year 2026, including USD 51.2 billion from data center sales, which rose 66% from the previous year.

- Global Growth Insights stated that North America remained the largest AI chip market, accounting for approximately 38% of global demand, equivalent to about USD 5.47 billion.

- According to Global Growth Insights, about 63% of semiconductor investors prioritized AI-focused chips over traditional processors in recent years.

- According to Wikipedia, the U.S. government supports the AI chip industry through the CHIPS Act and export controls, providing approximately USD 52.7 billion in direct funding, an estimated USD 24 billion in tax credits, and additional ecosystem support that continues into 2026.

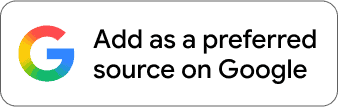

AI Chips Market Size

(Source: scoop.market.us)

- In 2026, the global AI chip market is projected to reach approximately USD 51 billion.

- By 2033, the market is forecast to reach around USD 341 billion, growing at a CAGR of 31.2% from 2024 to 2033.
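The two market-size figures and the growth rate can be cross-checked with the standard compound-growth formula. The sketch below applies the 31.2% rate to the 2026 estimate, which is an assumption, since the source quotes that CAGR for 2024-2033 rather than 2026-2033. The helper names `project` and `implied_cagr` are illustrative.

```python
# Consistency check for the AI chip market forecast.
# Assumption: the 31.2% CAGR (quoted for 2024-2033) also holds for 2026-2033.

def project(value: float, rate: float, years: int) -> float:
    """Compound `value` forward by `years` at annual growth `rate`."""
    return value * (1 + rate) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


size_2026 = 51.0   # USD billion, 2026 estimate
size_2033 = 341.0  # USD billion, 2033 forecast
cagr = 0.312

projected_2033 = project(size_2026, cagr, 2033 - 2026)
rate = implied_cagr(size_2026, size_2033, 2033 - 2026)

print(f"51B compounded at 31.2% for 7 years: {projected_2033:.1f}B")
print(f"CAGR implied by 51B -> 341B: {rate:.1%}")
```

Both directions agree: compounding USD 51 billion for seven years at 31.2% gives roughly USD 341 billion, and the growth rate implied by the two endpoints rounds to 31.2%.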

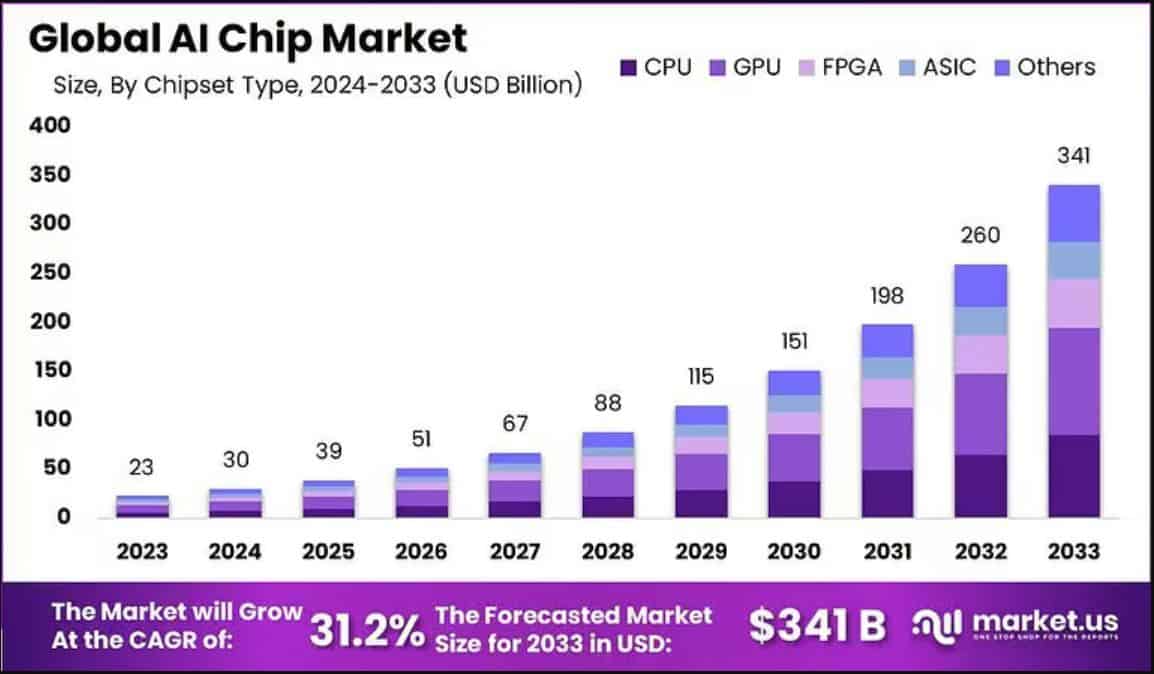

AI Chip Market Share By Chip Type

(Reference: omrglobal.com)

- CPUs held the largest share of the global AI chip market in 2025 at 29%, followed closely by GPUs at 26% and NPUs at 17%.

- Together, these three chip types accounted for about 72% of total market revenue.

- ASICs captured 16% of the AI chip market in 2025 and were the fastest-growing architecture.

- FPGAs, while useful for specialized tasks, held a smaller share of just 7%.
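Summing the listed percentages shows how the breakdown fits together. The only inputs are the five shares quoted above; the "unattributed" remainder is simply what the cited breakdown does not assign to any listed architecture.

```python
# Sum the 2025 AI chip market shares by chip type (percent of revenue).
shares = {"CPU": 29, "GPU": 26, "NPU": 17, "ASIC": 16, "FPGA": 7}

top_three = shares["CPU"] + shares["GPU"] + shares["NPU"]
listed_total = sum(shares.values())
unattributed = 100 - listed_total  # share not assigned to any listed type

print(f"CPU + GPU + NPU: {top_three}%")
print(f"All listed types: {listed_total}% (unattributed: {unattributed}%)")
```

The three leading architectures add up to 72%, and all five listed types total 95%, leaving about 5% not attributed to a specific architecture.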

AI Chip Usage By Application

- According to SQ Magazine, data centers remained the largest consumers of AI chips, accounting for approximately 52% of all units sold globally in 2025.

- AI chips in edge devices, including smart cameras, wearables, and industrial IoT, reached USD 14.1 billion in value.

- The automotive AI chip market, driven by advanced driver-assistance systems (ADAS), grew to USD 6.3 billion.

- Healthcare applications, including diagnostics and medical imaging, generated USD 2.2 billion in AI chip demand.

- Smartphones with neural processing units (NPUs) shipped over 980 million units worldwide.

- Moreover, AI chips in smart home devices accounted for USD 1.4 billion, robotics and automation systems for USD 2.6 billion, retail analytics platforms for USD 850 million, drones and uncrewed vehicles for USD 1.1 billion, and the gaming industry for USD 3.9 billion.

AI Chip Statistics By Key Players And Specifications

NVIDIA’s Current AI Chips

- H100 SXM has 80GB HBM3, 3.35 TB/s bandwidth, 2 PFLOPS FP8, consumes 700 W, and is priced around USD 25,000-30,000.

- H200 SXM features 141GB HBM3e, 4.8 TB/s bandwidth, 2 PFLOPS FP8, and 700 W, costing USD 30,000-40,000.

- B200 (Blackwell) delivers 192GB HBM3e, 8 TB/s bandwidth, 4.5 PFLOPS FP8, and 1000 W, priced at USD 45,000-50,000. It has 208 billion transistors, fifth-generation Tensor Cores, and 20 PFLOPS of FP4 with sparsity, and offers 5× the inference performance of the H100.

- The GB200 NVL72 system combines 72 Blackwell GPUs and 36 Grace CPUs per rack, delivers 30× the inference performance of H100 systems, costs about USD 3 million per rack, and requires liquid cooling.
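The listed specifications also support two rough efficiency metrics: FP8 throughput per kilowatt and price per PFLOPS. This is a back-of-the-envelope sketch that assumes the quoted FP8 PFLOPS and power figures, and uses the midpoint of each quoted price range as the chip's price; the `Accelerator` class is an illustrative helper.

```python
# Rough efficiency figures derived from the NVIDIA specs listed above.
# Assumptions: FP8 PFLOPS and power as quoted; price = midpoint of the quoted range.
from dataclasses import dataclass


@dataclass
class Accelerator:
    name: str
    fp8_pflops: float
    power_w: float
    price_low_usd: float
    price_high_usd: float

    @property
    def pflops_per_kw(self) -> float:
        """FP8 PFLOPS delivered per kilowatt of quoted power."""
        return self.fp8_pflops / (self.power_w / 1000)

    @property
    def usd_per_pflops(self) -> float:
        """Midpoint price divided by FP8 PFLOPS."""
        midpoint = (self.price_low_usd + self.price_high_usd) / 2
        return midpoint / self.fp8_pflops


chips = [
    Accelerator("H100 SXM", 2.0, 700, 25_000, 30_000),
    Accelerator("H200 SXM", 2.0, 700, 30_000, 40_000),
    Accelerator("B200", 4.5, 1000, 45_000, 50_000),
]

for chip in chips:
    print(f"{chip.name:9} {chip.pflops_per_kw:5.2f} PFLOPS/kW  "
          f"${chip.usd_per_pflops:,.0f} per PFLOPS")
```

On these figures, B200 delivers about 4.5 PFLOPS/kW versus roughly 2.86 for H100 and H200, and its midpoint price per FP8 PFLOPS (about USD 10,600) is lower than either Hopper part. Real-world efficiency also depends on memory bandwidth, sparsity, and utilization, which these ratios ignore.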

AMD AI Chips

- MI300X: 192GB HBM3, 5.3 TB/s, 2.6 PFLOPS, shipping.

- MI350X: 288GB HBM3e, 8 TB/s, 4.6 PFLOPS, launched June 2025.

- MI355X: 288GB HBM3e, 8 TB/s, 9.2 PFLOPS FP6, 2025.

- MI400 (Helios): 432GB HBM4, 19.6 TB/s, 40 PFLOPS FP4, 2026. AMD claims the MI350/MI400 offer up to 1.6× more memory than B200 and 20%-30% faster inference on Llama and DeepSeek models.

- AMD currently holds 8% of the discrete AI GPU market, and its share is expected to grow as adoption of its ROCm 7 software stack increases.

Hyperscaler AI Chips

- Google TPUs: v5e offers 197 TFLOPS and 16GB HBM; v5p 459 TFLOPS and 95GB HBM; v6e 918 TFLOPS and 32GB HBM; and v7 (Ironwood) 2,300 TFLOPS with HBM3e, approaching GB200 performance. Anthropic plans to use up to 1 million TPUs, bringing over 1 GW of capacity online in 2026.

- AWS Trainium: Trainium2 96GB HBM3, 2.8 TB/s, 1.26 PFLOPS; Trainium3 144GB HBM3e, 4.9 TB/s, 2.52 PFLOPS; Trainium4 introduces NVLink Fusion, enabling NVIDIA/Trainium hybrid clusters.

- Microsoft Maia 100: 64GB HBM2e, 1.8 TB/s, 3 POPS at 6-bit precision; in early testing on Bing, GitHub Copilot, and GPT-3.5 Turbo.

Specialized Inference Chips

- Cerebras WSE-3: 5 nm, 900K cores, 44GB SRAM, 125 PFLOPS, and 21 PB/s memory bandwidth, making it the largest chip ever built. In May 2025 benchmarks, it outperformed Blackwell on Llama 4 inference.

- Groq LPU: Deterministic inference, 300 tokens/sec on Llama 2 70B, and 10 times the energy efficiency of GPUs; NVIDIA licensed Groq’s inference technology in a December 2025 deal.

- SambaNova RDU: Efficient inference, with 4 times better performance per joule than Blackwell and a 10 kW rack versus 140 kW for an NVIDIA equivalent.
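The SambaNova rack figures translate directly into a power ratio and an annual energy estimate. This sketch assumes both racks draw their quoted power continuously for a full year; it compares raw power only, and does not account for differences in throughput per rack, which the separate performance-per-joule claim addresses.

```python
# Compare the rack power figures quoted for SambaNova and an NVIDIA equivalent.
# Assumption: both racks draw their quoted power continuously for a full year.
HOURS_PER_YEAR = 24 * 365

samba_kw = 10
nvidia_kw = 140

ratio = nvidia_kw / samba_kw
samba_mwh = samba_kw * HOURS_PER_YEAR / 1000
nvidia_mwh = nvidia_kw * HOURS_PER_YEAR / 1000

print(f"Power ratio: {ratio:.0f}x")
print(f"Annual energy: {samba_mwh:,.1f} MWh vs {nvidia_mwh:,.1f} MWh")
```

At full load, that is a 14× difference in rack power, or about 87.6 MWh versus 1,226.4 MWh per year.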

China AI Chips

- Huawei Ascend 910B: SMIC 7 nm N+1, 64GB HBM2e, 1.6 TB/s, 320 TFLOPS FP16, shipping.

- Ascend 910C: SMIC 7 nm N+2, 128GB HBM3, 3.2 TB/s, 800 TFLOPS FP16, shipping; achieves around 60% of H100 inference performance.

- 2025 production: About 1 million 910C chips, at 60%-70% lower cost than equivalent H100 setups. Long-term reliability and yields of around 30% remain challenges.
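A low yield multiplies the number of units that must be fabricated for each good one. This sketch assumes the roughly 30% yield applies uniformly to every unit started and that the 1 million figure counts good, shippable chips; both are assumptions, since the source does not define either term precisely.

```python
# How a low manufacturing yield inflates the number of units that must be started.
# Assumptions: ~30% yield applies to every unit; 1 million counts good chips only.
import math

good_chips = 1_000_000
yield_rate = 0.30

units_started = math.ceil(good_chips / yield_rate)
defective = units_started - good_chips

print(f"Units to fabricate: {units_started:,}")
print(f"Defective units:    {defective:,}")
```

Under these assumptions, roughly 3.33 million units must be fabricated to obtain 1 million good chips, with over 2.3 million discarded, which is one reason yield weighs so heavily on cost.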

Company Performance And Global Supply Chain Trends

- A report published by Electroiq found that in 2022, leaders such as NVIDIA and Google lost over USD 400 billion in market value, Amazon lost about USD 270 billion, and AMD lost around USD 30 billion. The 2025-2026 AI super-cycle has since pushed valuations to record highs.

- NVIDIA generated around USD 49 billion in AI-related revenue in 2025, about 39% higher than in 2024, underscoring its leadership in AI accelerator technology.

- AMD earned approximately USD 5.6 billion from its AI chip business in 2025, expanding its share in the data center market.

- Intel’s Gaudi 3 AI accelerator captured around 8.7% of the AI training market in 2025, showing growing adoption among enterprise customers.

- Google’s TPU hardware business is estimated to have reached USD 3.1 billion in 2025, maintaining its position in AI infrastructure.

- Apple’s new A19 chip delivers 35 TOPS of AI performance, enabling advanced on-device machine learning tasks.

- Qualcomm shipped more than 800 million AI-enabled IoT chips in 2025, reinforcing its footprint in connected devices and edge AI applications.

- SQ Magazine further reported that Amazon’s custom Trainium2 and Inferentia2 chips handled around 35% of new AI workloads on AWS in 2025.

- Taiwan Semiconductor Manufacturing Company (TSMC), a major chip foundry, allocated over 28% of its wafer production capacity to AI chip manufacturing.

- Revenue from AI chip packaging grew to roughly USD 4.7 billion as advanced 2.5D and 3D stacking technologies were widely adopted.

- Improved inventory practices shortened average AI chip lead times to about 12 weeks, while adoption of assembly and packaging technologies such as chip-on-board grew by nearly 38%, particularly in automotive and wearable electronics.

- Shipments of advanced EUV lithography systems reached 57 units, helping leading foundries meet aggressive sub‑5 nm production targets across the industry.

Recent Company Updates

- According to Knowledge Sourcing Intelligence, NVIDIA reported total revenue of approximately USD 57 billion in Q3 of fiscal year 2026, including USD 51.2 billion from data center sales, which rose 66% from the previous year.

- At its November 2025 analyst day, AMD projected 35% annual growth across its entire business and 60% annual growth in its data center segment over the next 3-5 years, driven by AI chip demand and a multiyear partnership with OpenAI.

- Google’s TPU hardware is increasingly seen as a strong competitor to NVIDIA GPUs in AI workloads.

- Meta is reportedly in talks to spend billions on Google TPUs for its data centers starting in 2027.
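Two of these updates invite quick arithmetic: the data center share of NVIDIA’s quarterly revenue, and what AMD’s growth targets would mean if compounded. This sketch assumes the 66% figure is year-over-year growth in NVIDIA’s data center sales, and that AMD’s targets compound at a constant annual rate; `growth_multiple` is an illustrative helper.

```python
# Arithmetic behind two of the recent company updates.
# Assumptions: 66% is year-over-year growth in NVIDIA's data center sales;
# AMD's growth targets compound annually at a constant rate.

total_revenue = 57.0  # USD billion, NVIDIA Q3 FY2026
data_center = 51.2    # USD billion
dc_growth = 0.66

dc_share = data_center / total_revenue
dc_prior_year = data_center / (1 + dc_growth)
print(f"Data center share of revenue: {dc_share:.1%}")
print(f"Implied prior-year data center revenue: {dc_prior_year:.1f}B")


def growth_multiple(rate: float, years: int) -> float:
    """Size after `years` of compounding at `rate`, relative to today."""
    return (1 + rate) ** years


for label, rate in [("AMD overall", 0.35), ("AMD data center", 0.60)]:
    print(f"{label}: {growth_multiple(rate, 3):.1f}x in 3 years, "
          f"{growth_multiple(rate, 5):.1f}x in 5 years")
```

Data center sales make up nearly 90% of the quarter’s revenue, implying about USD 30.8 billion in the prior-year quarter. Sustained 35% growth would grow AMD’s business about 2.5× in three years and 4.5× in five, while 60% growth would expand its data center segment about 4.1× and 10.5× over the same periods.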

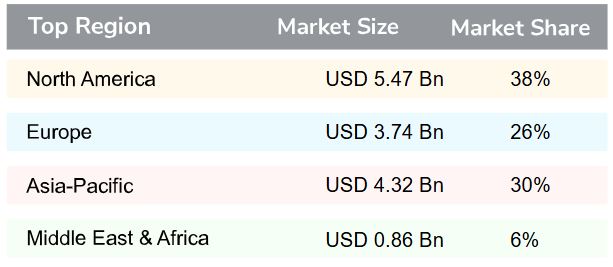

AI Chip Market Statistics By Region, 2026

(Source: globalgrowthinsights.com)

- Global Growth Insights stated that North America remained the largest AI chip market, accounting for approximately 38% of global demand, equivalent to about USD 5.47 billion. Cloud AI workloads accounted for nearly 46% of regional usage, while healthcare and defense accounted for about 29%.

- Europe accounted for roughly 26% of the AI chip market, or USD 3.74 billion.

- In Europe, industrial automation and automotive applications drove growth, with smart factories contributing 34% of usage and energy-efficient AI solutions about 31%.

- Asia-Pacific accounted for around 30% of the global AI chip market, valued at USD 4.32 billion. Consumer electronics accounted for 42% of usage, while smart cities and telecom accounted for nearly 36%.

- The Middle East & Africa accounted for about 6% of global AI chip demand, approximately USD 0.86 billion, led by smart governance and surveillance (39%) and industrial AI (28%).
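Each region’s share and dollar value together imply a total market size, so the regional figures can be checked for internal consistency. The inputs below are the Global Growth Insights shares and values listed above; dividing value by share recovers the total that each pair implies.

```python
# Back out the total market implied by each region's share and dollar value.
regions = {
    "North America": (0.38, 5.47),  # (share of demand, USD billion)
    "Europe": (0.26, 3.74),
    "Asia-Pacific": (0.30, 4.32),
    "Middle East & Africa": (0.06, 0.86),
}

implied = {name: value / share for name, (share, value) in regions.items()}
for name, total in implied.items():
    print(f"{name:21} implied total: {total:.2f}B")

total_share = sum(share for share, _ in regions.values())
total_value = sum(value for _, value in regions.values())
print(f"Shares sum to {total_share:.0%}; values sum to {total_value:.2f}B")
```

The four shares sum to 100%, and every region implies a total of roughly USD 14.4 billion, so this regional dataset uses a much smaller market base than the USD 51 billion estimate from a different source and should not be compared with it directly.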

Global AI Chip Investments And Government Policies

- According to Global Growth Insights, about 63% of semiconductor investors prioritized AI-focused chips over traditional processors in recent years.

- Nearly 48% of capital went into advanced manufacturing to improve performance and efficiency.

- Meanwhile, venture-backed innovations accounted for 29% of new AI chip designs, particularly in edge and low-power applications.

- Strategic partnerships influenced 52% of long-term investment decisions.

- 44% of industry participants expanded fabrication capacity to support rising AI workloads.

By Country

- According to Wikipedia, the U.S. government supports the AI chip industry through the CHIPS Act and export controls, providing approximately USD 52.7 billion in direct funding, an estimated USD 24 billion in tax credits, and additional ecosystem support that continues into 2026.

- China promotes domestic AI chip development via the “Big Fund” and other industrial plans, expecting around USD 12.7 billion in public funding, with potential incentives totaling up to USD 70 billion.

- According to sciencebusiness.net, the EU’s Chips Act combines public and private funding, with total investment projected to exceed USD 85 billion in 2026.

- South Korea supports AI chip production through the K‑CHIPS program and private corporate investments, with government funding of USD 3.1 billion for a new foundry and roughly USD 73 billion from Samsung and others in R&D and facilities.

- Tom’s Hardware further stated that Japan provides public R&D support and targeted chip-production funds totaling around USD 10 billion, including USD 1.6 billion for semiconductor-specific projects.

- Bloomberg also reported that India is establishing a semiconductor fund to boost chip manufacturing and design, with approximately USD 11 billion in investments planned for 2026.

New Product Development

- About 57% of the new AI chips were designed to handle multiple tasks simultaneously, improving parallel processing.

- Nearly 49% focused on reducing energy use and heat output to improve power management.

- Around 46% of the chips were made for edge devices, allowing real-time AI processing outside of data centers.

- Multi-core and mixed-architecture designs accounted for 38% of new chip innovations.

- About 34% of the new chips were designed to work well with existing software, helping companies adopt them more quickly in business and industrial applications.

AI Chip Memory Technology Analysis

- On-chip memory offers very high speed and efficiency but limited capacity. For example, Cerebras’s first-generation Wafer Scale Engine achieves 9 petabytes/sec of memory bandwidth with 18GB of on-chip memory, supporting 400,000 AI-focused cores.

- High Bandwidth Memory (HBM2) is widely used in high-end graphics and supercomputing hardware, including AMD’s Radeon RX Vega 56, NVIDIA’s Tesla V100, Fujitsu’s A64FX processor with 4 HBM2 DRAMs, and NEC’s Vector Engine Processor with 6 HBM2 DRAMs.

- Graphics DDR (GDDR) memory provides AI system designers with high bandwidth and benefits from manufacturing methods similar to those of standard DDR memory, backed by over 20 years of proven use.

- Compared with GDDR6, HBM2 is more efficient in both power and space.

- GDDR6’s SoC PHY consumes 3.5-4 times more power and occupies 1.5-1.75 times more area than HBM2’s.

- Overall, HBM2 is preferred where power efficiency and compact design are critical, while GDDR6 remains a strong choice for high-bandwidth applications that benefit from its mature production methods.
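The bandwidth difference between HBM2 and GDDR6 comes down to one formula: peak bandwidth equals interface width times per-pin data rate, divided by 8 to convert bits to bytes. HBM2 uses a very wide 1024-bit interface per stack at a modest per-pin rate, while each GDDR6 device uses a narrow 32-bit interface at a much higher rate. The per-pin rates below (2.0 Gb/s for HBM2, 14 Gb/s for GDDR6) are assumed typical values, not figures from the source.

```python
# Peak bandwidth of a memory interface: width (bits) x per-pin rate (Gb/s) / 8.
# Assumed per-pin rates: 2.0 Gb/s for HBM2 and 14 Gb/s for GDDR6.

def peak_bandwidth_gbs(width_bits: int, gbps_per_pin: float, devices: int = 1) -> float:
    """Peak bandwidth in GB/s for `devices` identical memory devices."""
    return width_bits * gbps_per_pin / 8 * devices


hbm2_stack = peak_bandwidth_gbs(1024, 2.0)  # one HBM2 stack, 1024-bit interface
gddr6_chip = peak_bandwidth_gbs(32, 14.0)   # one GDDR6 device, 32-bit interface

print(f"One HBM2 stack: {hbm2_stack:.0f} GB/s")
print(f"One GDDR6 chip: {gddr6_chip:.0f} GB/s")
print(f"4 HBM2 stacks (A64FX-style): {peak_bandwidth_gbs(1024, 2.0, 4):.0f} GB/s")
print(f"6 HBM2 stacks (NEC VE-style): {peak_bandwidth_gbs(1024, 2.0, 6):.0f} GB/s")
print(f"GDDR6 chips to match one HBM2 stack: {hbm2_stack / gddr6_chip:.1f}")
```

With these assumptions, one HBM2 stack provides 256 GB/s, so four stacks reach 1,024 GB/s, in line with the roughly 1 TB/s cited for Fujitsu’s A64FX. Matching a single stack takes about 4.6 GDDR6 devices running at seven times the per-pin rate.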

Conclusion

In conclusion, AI chips have become essential to modern technology. As more industries adopt AI, demand for these chips is growing rapidly, and market data shows strong growth and sustained investor interest. As the technology improves, AI chips are expected to become faster, more efficient, and more affordable, making them central to future innovation and digital growth.

FAQ

What is an AI chip used for?

An AI chip accelerates data processing and machine learning workloads, powering smart technologies quickly and efficiently.

How long do AI chips last?

AI chips usually last for 3 to 5 years, depending on usage and cooling conditions.

What is Elon Musk’s AI chip?

Dojo is Tesla’s custom AI chip, developed to power AI training for self-driving technology and automation systems.